Stop Cluttering Your Codebase with Brittle Generated Tests

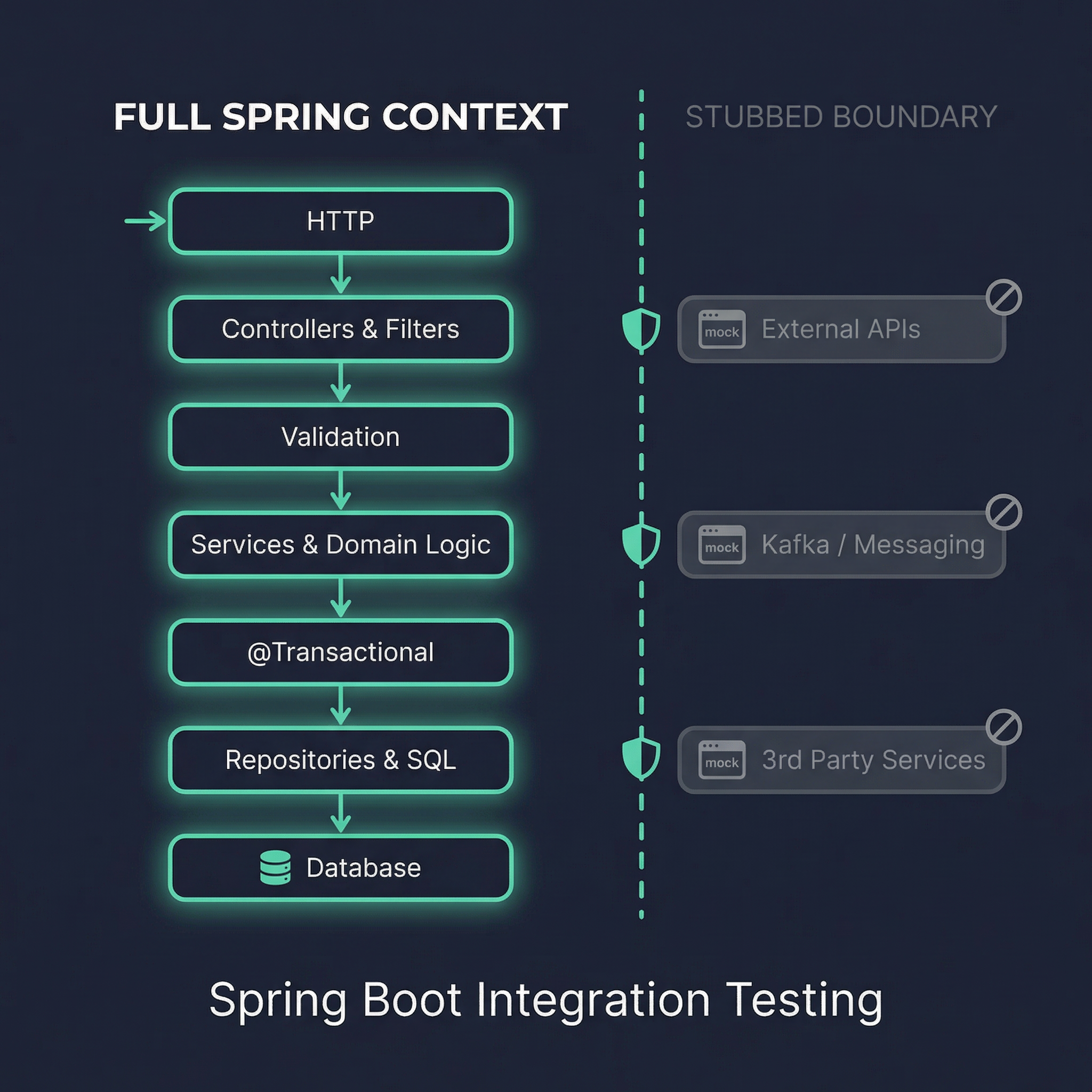

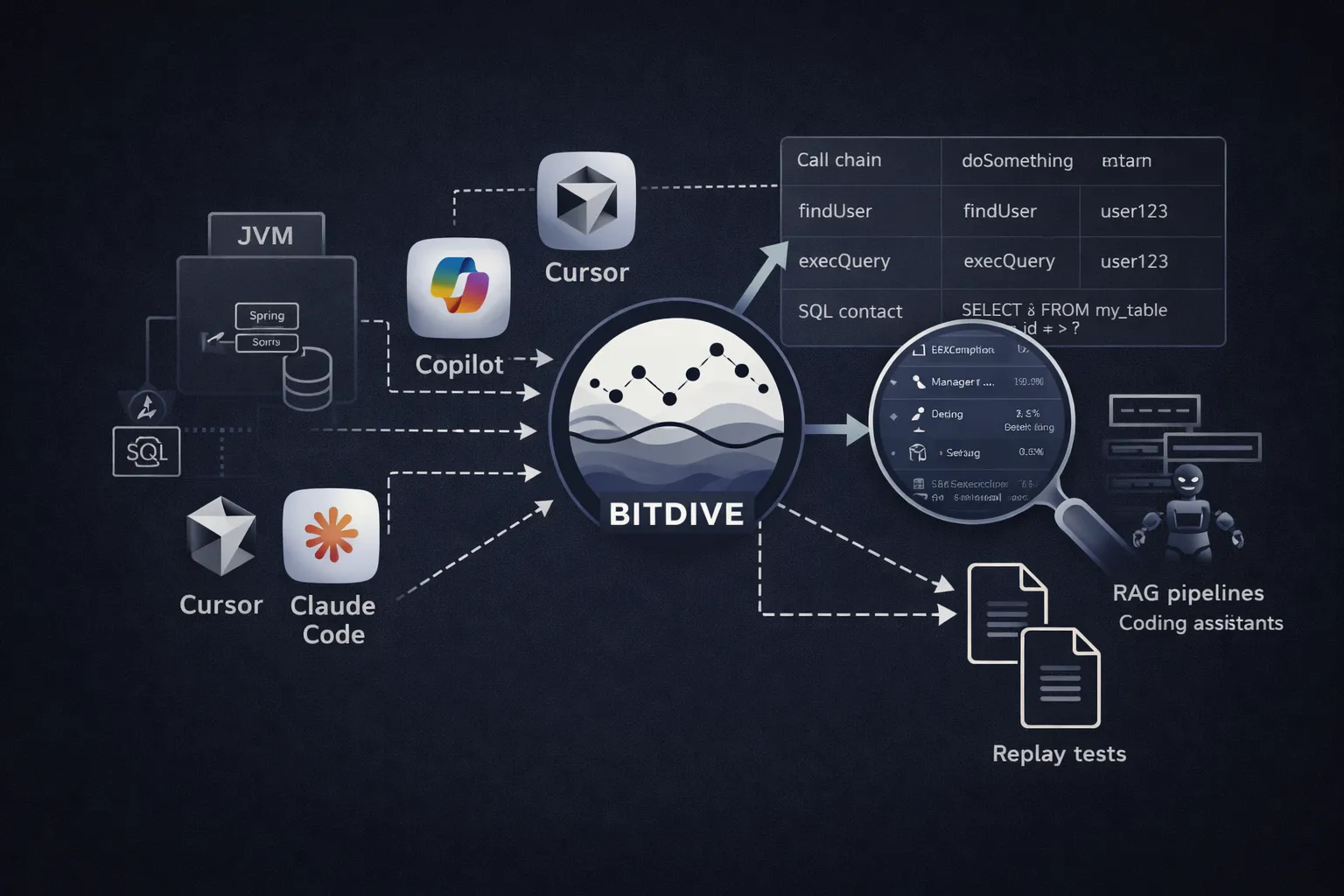

TL;DR: The industry has a strange habit of treating any tool that can generate tests as automatically useful. If recording a scenario leaves 300 new .java files in the repo, the team assumes it now has "more quality." It doesn't. Automated test generation often becomes a source of engineering pain: it clutters the repository and buries real regressions in noise. There is a more mature path: capture real execution traces, store them as data, and replay them dynamically.
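To make the capture, store, and replay idea concrete, here is a minimal sketch. Everything in it is hypothetical and not taken from any particular tool: the `TraceReplay` class, the pipe-delimited `operation|input|output` trace format, and the string operations standing in for a system under test. It shows one design point. The recorded scenario lives in a single piece of data, and one generic harness replays it. A new scenario therefore adds lines of data rather than a new generated test class. It uses records and text blocks, so it assumes Java 16 or later.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;
import java.util.Map;
import java.util.function.Function;

public class TraceReplay {

    // One recorded call: which operation ran, what it received, what it returned.
    record TraceEntry(String operation, String input, String expectedOutput) {}

    // Hypothetical trace format: one "operation|input|output" line per call.
    // In a real setup this would be captured from a live run and kept in a data file.
    static final String RECORDED_TRACE = """
            toUpper|hello|HELLO
            reverse|abc|cba
            length|trace|5
            """;

    // Parses the stored trace back into entries. The trace stays data, not generated code.
    static List<TraceEntry> parse(String trace) {
        List<TraceEntry> entries = new ArrayList<>();
        for (String line : trace.strip().split("\n")) {
            String[] parts = line.split("\\|", -1);
            if (parts.length != 3) {
                throw new IllegalArgumentException("Malformed trace line: " + line);
            }
            entries.add(new TraceEntry(parts[0], parts[1], parts[2]));
        }
        return entries;
    }

    // Hypothetical system under test: operations are looked up by name at replay time.
    static final Map<String, Function<String, String>> OPERATIONS = Map.of(
            "toUpper", s -> s.toUpperCase(Locale.ROOT),
            "reverse", s -> new StringBuilder(s).reverse().toString(),
            "length", s -> String.valueOf(s.length()));

    // Replays every recorded call and returns a description of each mismatch.
    static List<String> replay(List<TraceEntry> entries) {
        List<String> failures = new ArrayList<>();
        for (TraceEntry e : entries) {
            Function<String, String> op = OPERATIONS.get(e.operation());
            if (op == null) {
                failures.add("Unknown operation: " + e.operation());
                continue;
            }
            String actual = op.apply(e.input());
            if (!actual.equals(e.expectedOutput())) {
                failures.add(e.operation() + "(" + e.input() + "): expected "
                        + e.expectedOutput() + ", got " + actual);
            }
        }
        return failures;
    }

    public static void main(String[] args) {
        List<String> failures = replay(parse(RECORDED_TRACE));
        System.out.println(failures.isEmpty()
                ? "All recorded calls match"
                : String.join("\n", failures));
    }
}
```

Running `main` prints `All recorded calls match`. If you change an expected value in the trace, `replay` reports exactly that mismatch instead of failing somewhere inside hundreds of generated files.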