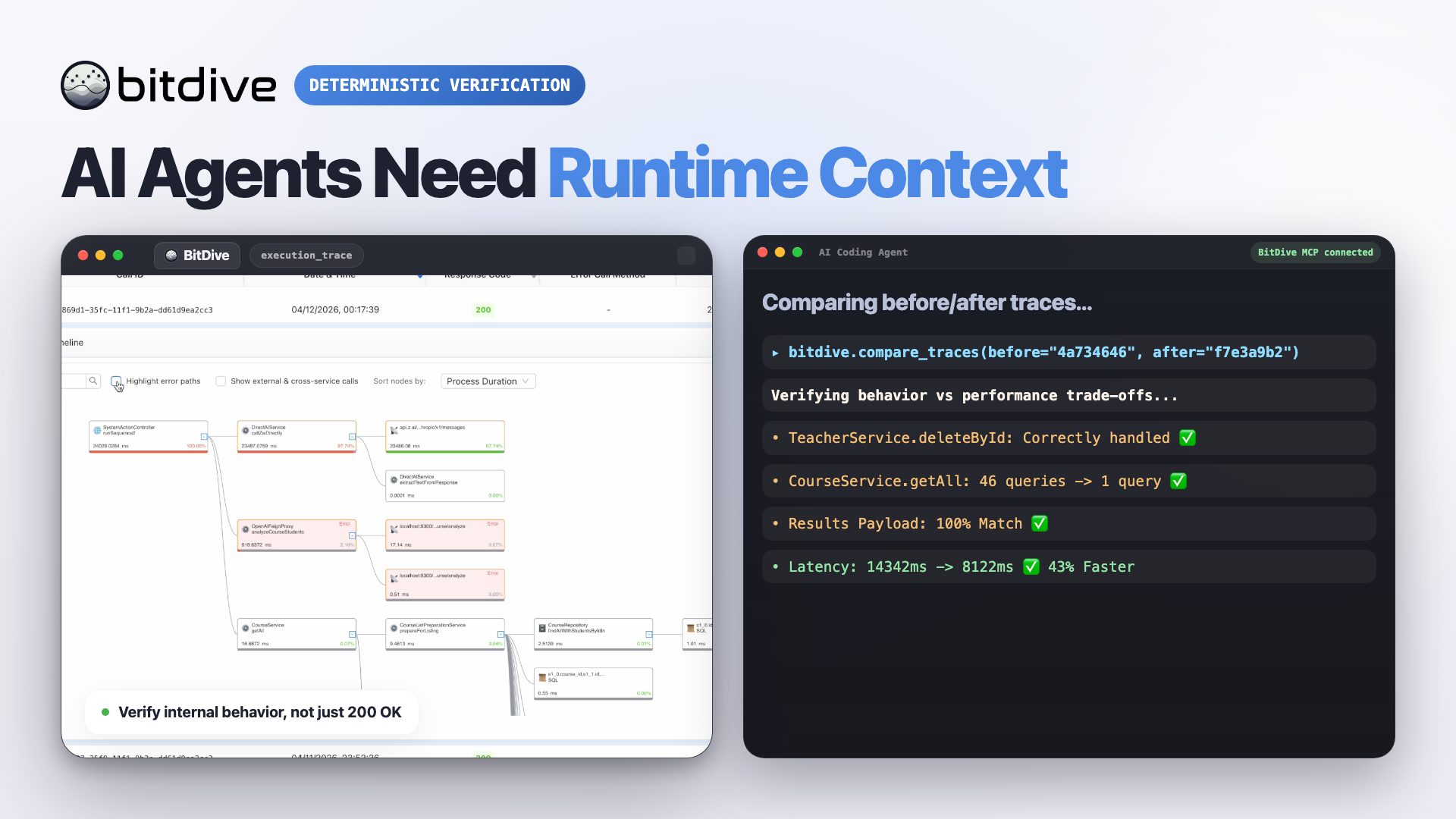

Autonomous Verification Layer for AI-Driven Code Changes


Establish Behavioral Ground Truth

Capture real Java runtime data—traces, SQL, and method calls—to provide AI agents with a deterministic baseline of existing behavior.

AI-Agent Runtime Context

Give Cursor, Claude, or Devin the exact runtime context they need to make precise code changes grounded in reality, not static assumptions.

Deterministic Proof of Correctness

Verify AI-generated code by comparing before vs. after traces. Automatically catch side effects, SQL drift, and performance regressions.

Autonomous Regression Memory

Lock in verified logic as autonomous JUnit replay suites with zero token usage. Proven behavior stays protected in your CI/CD pipeline.

Watch the Verification Workflow in Action

Deploy BitDive Self-Hosted in One Command

docker run -d --privileged -p 443:443 -p 8089:8089 --name bitdive-launcher frolikoveabitdive/bitdive-launcher

npx skills add bitDive/bitdive-skills --skill '*'

One Runtime Trace, Full Execution Context for Code Verification

A single runtime snapshot becomes the baseline for debugging, AI reasoning, and deterministic regression replay.

Instead of reconstructing state from logs, BitDive captures the real execution surface that matters to verification.

- HTTP request payloads and headers

- Execution tree with timings

- Method arguments and return values

- Database queries with results

- REST requests and responses

- Kafka publishes and consumed messages

- Exception details and failure paths
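To make the list above concrete, here is a minimal Java sketch of how one captured execution might be modeled. The type and field names (`TraceSnapshot`, `MethodCall`, `SqlCall`) are hypothetical and are not BitDive's actual data model. Java 16+ records are assumed.

```java
import java.util.List;
import java.util.Map;

// Hypothetical model of one captured execution; not BitDive's real schema.
record SqlCall(String statement, int rowCount, long durationMicros) {}

// One node of the execution tree: arguments, return value, timing, and nested calls.
record MethodCall(String signature, List<String> arguments, String returnValue,
                  long durationMicros, List<MethodCall> children, List<SqlCall> queries) {

    // Total number of SQL statements issued by this call and everything below it.
    int totalQueries() {
        int total = queries.size();
        for (MethodCall child : children) {
            total += child.totalQueries();
        }
        return total;
    }
}

// The inbound HTTP request plus the root of the execution tree it triggered.
record TraceSnapshot(String httpMethod, String path, Map<String, String> headers,
                     String requestBody, MethodCall root) {}
```

Keeping the request, the call tree, and the queries in one structure is what lets a single snapshot serve debugging, agent context, and replay alike.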

Deterministic JUnit Replay Tests from Runtime Traces

Real executions become standard JUnit replay tests with virtualized boundaries and zero manual mock setup.

BitDive does not ask an LLM to invent tests. It records what the application actually did and replays that behavior as runnable regression assets.

- Runtime-grounded: replay suites are built from real application behavior, not imagined scenarios.

- Boundary virtualization: databases, REST calls, and Kafka interactions are isolated directly in the JVM.

- Standard output: recorded suites remain ordinary JUnit that runs via mvn test.
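The idea of boundary virtualization can be illustrated with a small sketch: an outbound dependency is replaced by an object that replays responses captured in an earlier run. Everything here (`PriceClient`, `RecordedPriceClient`, `CartService`) is a hypothetical example written in plain Java, not code generated by BitDive.

```java
import java.util.Map;

// Hypothetical boundary: an outbound call the service makes (REST, database, Kafka).
interface PriceClient {
    long priceInCents(String sku);
}

// Replays responses captured in an earlier run instead of calling the real system.
final class RecordedPriceClient implements PriceClient {
    private final Map<String, Long> recorded;

    RecordedPriceClient(Map<String, Long> recorded) {
        this.recorded = recorded;
    }

    @Override
    public long priceInCents(String sku) {
        Long value = recorded.get(sku);
        if (value == null) {
            // An unrecorded call means behavior diverged from the captured run.
            throw new IllegalStateException("No recorded response for sku " + sku);
        }
        return value;
    }
}

// Code under test: depends only on the boundary interface.
final class CartService {
    private final PriceClient prices;

    CartService(PriceClient prices) {
        this.prices = prices;
    }

    long total(Map<String, Integer> quantitiesBySku) {
        long sum = 0;
        for (Map.Entry<String, Integer> line : quantitiesBySku.entrySet()) {
            sum += prices.priceInCents(line.getKey()) * line.getValue();
        }
        return sum;
    }
}
```

In a JUnit test, the recorded input would become the method argument and the recorded total the expected value of an assertion, so the test needs no live pricing service.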

Runtime Context and Trace Comparison for AI Agents

The agent does not jump straight from prompt to patch. It moves through baseline, change, proof, and regression management.

Grounding AI in Ground Truth

Before letting an AI agent touch the code, establish a behavioral baseline using real runtime evidence.

- Identify the exact user flow or API endpoint the AI agent needs to modify.

- Capture a runtime snapshot to see the real logic: SQL queries, method arguments, and downstream dependencies.

- Give the AI agent this trace via MCP to eliminate hallucinations about how the code actually works.

Context-Aware Implementation

The AI agent makes precise changes based on runtime truth, not just static code analysis.

- The agent uses captured inputs and mock behaviors to scope the change exactly where it matters.

- Avoid large, risky refactors by isolating the logic that actually needs fixing based on the trace.

- The implementation is informed by real-world data payloads and system interactions.

Deterministic Proof of Correctness

The agent proves the change works by comparing the new execution trace against the baseline.

- Capture a new trace after the AI agent applies the fix.

- Perform automated behavior comparison: did the SQL change? Are there extra HTTP calls? Has performance degraded?

- Eliminate manual review fatigue by letting BitDive highlight the exact behavioral differences.
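The three questions above (SQL drift, extra HTTP calls, slowdown) can be sketched as a simple before/after comparison. This is an illustrative approximation, not BitDive's comparison algorithm. `TraceSummary`, `TraceDiff`, and the `maxSlowdown` ratio are assumptions introduced for the example.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical summary of one execution; field names are illustrative only.
record TraceSummary(List<String> sqlStatements, List<String> httpCalls, long durationMillis) {}

final class TraceDiff {
    // Returns human-readable differences between a baseline and a candidate trace.
    static List<String> compare(TraceSummary before, TraceSummary after, double maxSlowdown) {
        List<String> findings = new ArrayList<>();

        // Any change in the ordered list of statements counts as SQL drift.
        if (!before.sqlStatements().equals(after.sqlStatements())) {
            findings.add("SQL changed: " + before.sqlStatements().size()
                    + " statement(s) before, " + after.sqlStatements().size() + " after");
        }

        // HTTP calls present after the change but absent from the baseline.
        List<String> extraCalls = new ArrayList<>(after.httpCalls());
        for (String call : before.httpCalls()) {
            extraCalls.remove(call); // removes one occurrence, so new duplicates are still caught
        }
        if (!extraCalls.isEmpty()) {
            findings.add("Extra HTTP calls: " + extraCalls);
        }

        // Flag the run if it is slower than the baseline by more than the allowed ratio.
        if (after.durationMillis() > before.durationMillis() * maxSlowdown) {
            findings.add("Slower: " + before.durationMillis() + " ms -> "
                    + after.durationMillis() + " ms");
        }
        return findings;
    }
}
```

An empty result means the candidate trace matches the baseline on all three checks. Any finding points the reviewer at a specific behavioral difference instead of a full code diff.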

Locking in Verified Behavior

Once proven, the verified behavior is automatically preserved as a deterministic JUnit regression test.

- Refresh the regression baseline from the verified successful execution.

- Turn the AI agent’s successful work into a zero-token JUnit suite for your CI pipeline.

- Scale your development without fear: every AI-driven change is protected by runtime-backed proof.

How Deterministic Verification Reduces Engineering and Testing Costs

Deterministic verification recovers resources across the entire development lifecycle, from AI token consumption to human engineering hours.

- Zero token usage to create deterministic tests from captured executions

- Zero token usage to refresh tests after a verified change

- No AI-written test logic to debug or rewrite

- Fewer AI agent iterations due to precise runtime context

- Less mock work because dependencies are auto-mocked from reality

- Reduced cloud costs through more efficient code (no N+1 queries, no duplicate requests)

- Less time preparing context for AI tools

- Test suites created in minutes instead of by hand

- Significantly faster root-cause analysis

- Less manual mock setup with automatic dependency virtualization

- Fewer production incidents through deterministic verification

- Better performance visibility for regressions, redundant calls, and N+1 queries
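The N+1 pattern mentioned in the list above can be spotted mechanically once the SQL of a single execution is captured. The following is a simple heuristic sketch, not BitDive's detection logic: it normalizes literals and counts repeated query shapes. The class name `NPlusOneDetector` and the threshold parameter are assumptions.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

final class NPlusOneDetector {
    // Replaces string and numeric literals with '?' so repeated queries share one shape.
    static String normalize(String sql) {
        return sql.replaceAll("'[^']*'", "?")
                  .replaceAll("\\b\\d+\\b", "?")
                  .trim();
    }

    // Returns the query shapes executed at least `threshold` times within one trace.
    static Map<String, Integer> repeatedShapes(List<String> statements, int threshold) {
        Map<String, Integer> counts = new LinkedHashMap<>();
        for (String sql : statements) {
            counts.merge(normalize(sql), 1, Integer::sum);
        }
        // Keep only the shapes that repeat often enough to be suspicious.
        counts.values().removeIf(count -> count < threshold);
        return counts;
    }
}
```

A shape that repeats once per row of a parent query is the typical N+1 signature. The right threshold depends on the workload, so it is left as a parameter.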

Production-Safe Runtime Capture for Java

Fast setup. Low overhead. Stable replay.

Fast Java Agent Setup

Add the dependency and start capturing. No code changes.

Low Overhead Tracing

Built for high-load Java services with binary capture and compression.

Auto-Masking PII

Pre-defined rules for sensitive data. Masking happens before capture.
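As a rough illustration of masking before capture, the sketch below applies two regex rules to a value before it would be written to a trace. The rules and the `PiiMasker` class are hypothetical examples, not BitDive's built-in rule set.

```java
import java.util.regex.Pattern;

final class PiiMasker {
    // Illustrative rules only; real rule sets are usually broader and configurable.
    private static final Pattern EMAIL =
            Pattern.compile("[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\\.[A-Za-z]{2,}");
    private static final Pattern CARD_NUMBER =
            Pattern.compile("\\b\\d{4}[ -]?\\d{4}[ -]?\\d{4}[ -]?\\d{4}\\b");

    // Masks a value before it is recorded, so the raw data never reaches storage.
    static String mask(String value) {
        String masked = EMAIL.matcher(value).replaceAll("***@***");
        return CARD_NUMBER.matcher(masked).replaceAll("****-****-****-****");
    }
}
```

Applying the rules at the point of capture, rather than when traces are read, means downstream consumers such as replay tests and AI agents only ever see masked values.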

Local-First Privacy

Data stays in your infrastructure. No external cloud required for capture.

Zero-Trust Security

Auth, granular access control, and full encryption for data both at rest and in transit.

Autonomous Boundary Virtualization

Autonomous replay of complex flows without booting the whole environment.

Verify Code Changes with Runtime Evidence

Ground every change in runtime evidence, prove it with trace comparison, and keep the result as deterministic regression memory.